Artificial intelligence has made incredible strides in recent years. Who hasn’t asked Alexa or Siri for advice on the best pizza restaurant in the neighborhood? These and other chatbots, though, have not yet passed the critical Turing Test, which requires AI to fool us into believing we’re talking to a real human. While it hasn’t achieved the ambitious goals of its originators, AI still has a powerful and unseen influence over us. Automated e-commerce product recommendations, credit screening, and résumé scanning are some of the many ways in which machine learning algorithms control our lives – and not always for the better.

◊

Apple’s “intelligent assistant” Siri can multiply 3-digit numbers, tell you the winner of the NBA championship in 1968 (the Boston Celtics), and report the weather in Paris. But is Siri’s software intelligent?

Siri appears to be smart and performs better than most humans at answering the above questions. However, anyone who has engaged with it beyond easy Web look-up questions such as “what’s the height of the Empire State Building?” (1,250 feet) will soon discover its many limitations.

The artificial intelligence – or AI – technology behind this new generation of consumer digital assistants may have bigger problems than clueless answers. AI can mislead the people who use it with answers that appear logical or scientific but are just the opposite.

In the groundbreaking movie 2001: A Space Odyssey, director Stanley Kubrick portrayed the coldly logical HAL 9000 computer as a psychopathic presence. More recently, real scientists have been sounding the alarm. Before he died, physicist Stephen Hawking raised the possibility that AI could threaten humankind. Silicon Valley titans such as Elon Musk have issued ominous warnings as well.

Perhaps their concerns have been exaggerated, but AI’s behind-the-scenes influence – from determining who gets a home mortgage to who’s chosen as a job candidate – deserves far more attention. The power of AI to persuade humans with seemingly definitive answers concerned the original scientists who invented computers and “thinking” software back in the 20th century. Like these early AI pioneers, we are today both frightened and exhilarated by the potential of intelligent machines.

Passing the Turing Test

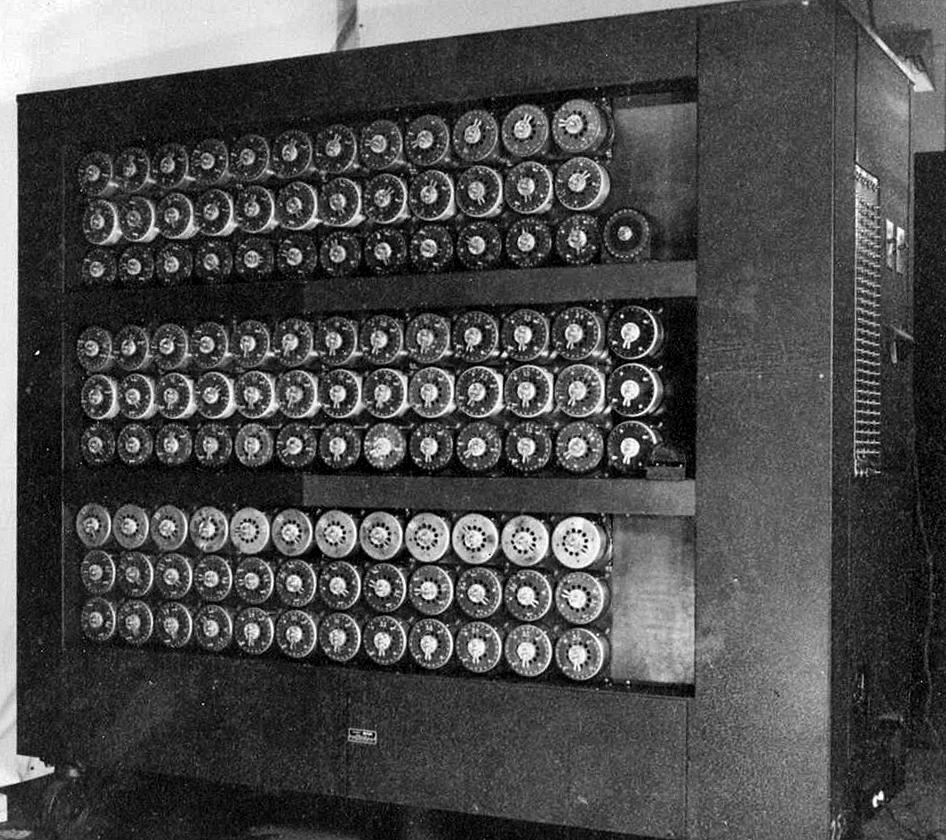

Besides being computer science’s foundational thinker and a legendary code breaker, Alan Turing also laid the groundwork for artificial intelligence. After World War II, he took a position at the U.K.’s prestigious National Physical Laboratory. While there, he wrote one of the first papers to consider the possibility of computers recreating human intelligence. He argued that an intelligent machine should be able to, in a sense, “educate” itself, much as a child learns on its own.

“No, I’m not interested in developing a powerful brain. All I’m after is just a mediocre brain, something like the President of the American Telephone and Telegraph Company.” —Alan Turing

After drawing up plans for the Automatic Computing Engine, one of the first stored-program digital computers (he was busy!), Turing eventually returned to artificial intelligence. In 1950, he published his seminal paper “Computing Machinery and Intelligence,” interestingly enough in the British philosophy journal Mind.

In this paper, Turing argued that computers would eventually think, though he wasn’t sure exactly how they might do so. He focused instead on how we would recognize a thinking machine, and introduced his “imitation game,” a kind of contest in which human interrogators guess whether the written or verbal responses of an unseen party come from a human or a computer. And yes, it’s also the source of the title of the 2014 movie about Turing, The Imitation Game.

These days we all engage with chatbots and other virtual assistants such as Siri and Alexa. While these are impressive software tools, we soon realize we’re dealing with a machine that spews canned answers. For your own amusement, try asking Siri whether “pearls” rhymes with “earls,” or whether she “prefers salad before or after dinner.” You’ll quickly confirm that you’re talking to a machine. If Siri could convince you that it was a smart human, however, it would win the imitation game and pass the Turing Test for machine intelligence.

Alan Turing and his team designed an electromechanical machine, called the bombe, to break German codes during World War II. (Source: Wikimedia Commons)

Turing boldly predicted that by the year 2000, a computer would be able to fool its human interrogators 30% of the time. No one now believes we’re even close to that goal. However, that hasn’t stopped the annual Loebner Prize competition. Since 1991, AI developers have brought their chatbots to be evaluated by the Loebner judges, and the prize goes to the most human-like conversation.

In 2019, Mitsuku won “best overall chatbot” for its conversational abilities. For the grand prize of $100,000, however, a chatbot would have to schmooze well enough to convince all the Loebner judges of its conversational skills and thereby pass the Turing Test. That prize remains unclaimed.

Faking Intelligence

Of course, AI has made great progress, but usually in more restricted settings and focused on specific problem solving. To date, AI has beaten the world’s best chess and Go players. It has also, in the form of IBM’s Watson, famously defeated Jeopardy! champion Ken Jennings.

One could argue that these examples are more a testament to brute-force computing, in which thousands of processors mechanically score possible moves in a game, or, in the case of Watson on Jeopardy!, robotically search massive databases for keywords and rate possible answers.

Deep Blue was a supercomputer with hundreds of processors specially designed to evaluate chess positions.

(Credit: Jim Gardner, via Wikipedia)

Around 1948, Turing also wrote chess-playing software. Although no computer capable of running it had yet been completed, his use of search techniques to evaluate the chessboard forms the basis of AI methods still used today. Similar algorithms were even found in IBM’s chess-playing machine Deep Blue, which forced grandmaster Garry Kasparov to resign unceremoniously back in 1997.
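To make “search techniques to evaluate the chessboard” a little more concrete, here is a minimal sketch of minimax, the textbook form of game-tree lookahead: each side is assumed to choose the move that is best for it, and the final positions are scored by an evaluation function. This is not Turing’s or Deep Blue’s actual code; the toy tree and its leaf scores are made up for illustration, and real chess programs add refinements such as alpha-beta pruning and much deeper searches.

```python
from typing import List, Union

# A node is either a leaf score (an int, from the maximizing player's
# point of view) or a list of child positions.
Tree = Union[int, List["Tree"]]

def minimax(node: Tree, maximizing: bool) -> int:
    """Return the best score reachable, assuming both sides play perfectly."""
    if isinstance(node, int):  # leaf: static evaluation of the position
        return node
    scores = [minimax(child, not maximizing) for child in node]
    return max(scores) if maximizing else min(scores)

# Hypothetical two-ply tree: we choose a move, then the opponent replies.
toy_tree = [
    [3, 12, 8],   # move A: the opponent can hold us to 3
    [2, 4, 6],    # move B: the opponent can hold us to 2
    [14, 5, 2],   # move C: the opponent can hold us to 2
]

# Pick the move whose worst case (after the opponent's best reply) is highest.
best_move = max(range(len(toy_tree)), key=lambda i: minimax(toy_tree[i], False))
print("ABC"[best_move], minimax(toy_tree, True))  # A 3
```

Move A wins even though move C contains the single best leaf (14), because a sensible opponent would never allow it. Assuming a rational opponent is what lets the program judge a position several moves ahead without any understanding of chess.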

Chess software is smart in a limited way, yet it can fool even world-class players into believing they’re facing a human opponent. Kasparov felt that playing Deep Blue was like challenging a player who was subtle, intuitive, and very human. As if anticipating the grandmaster’s insight, Turing had suggested that good AI software should make deliberate mistakes to appear more human. In the case of Deep Blue, a version of this idea may have inadvertently led to its victory: A simple, unintentional bug in Deep Blue’s code is thought to have produced a seemingly random move that flustered Kasparov just enough to make him feel outplayed, ultimately contributing to his defeat.

Eliza: AI’s First Psychotherapist

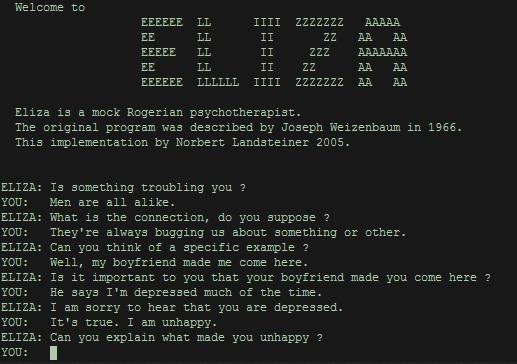

A computer’s ability to deceive has been understood since the early days of AI. In 1966, legendary MIT computer scientist Joseph Weizenbaum developed a natural language processing program called Eliza that simulated a Rogerian psychotherapist. (For the unanalyzed, that’s a form of therapy in which patients run the show, so to speak, while the analyst steps back.)

The Eliza program was essentially the first chatbot, and Weizenbaum began to notice his staff confiding in it. Yet Weizenbaum knew that Eliza was merely matching keywords in the patient’s typed input, turning statements into questions, and making other variations on the input’s grammar.
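A minimal sketch of the keyword-and-reflection trick described above, assuming a tiny hand-written rule set. Weizenbaum’s original was written in MAD-SLIP and driven by a much larger script (the therapist script was called DOCTOR); the three rules and the pronoun table here are hypothetical simplifications, not his code.

```python
import re

# Pronoun swaps so the user's words can be echoed back from Eliza's side.
REFLECTIONS = {
    "i": "you", "me": "you", "my": "your", "am": "are",
    "you": "I", "your": "my", "are": "am",
}

# Hypothetical keyword rules: a pattern to look for and a reply template.
RULES = [
    (re.compile(r"i feel (.*)", re.IGNORECASE), "Why do you feel {0}?"),
    (re.compile(r"i am (.*)", re.IGNORECASE), "How long have you been {0}?"),
    (re.compile(r"my (\w+)", re.IGNORECASE), "Tell me more about your {0}."),
]

def reflect(fragment: str) -> str:
    """Swap first- and second-person words in a captured phrase."""
    words = fragment.lower().rstrip(".!?").split()
    return " ".join(REFLECTIONS.get(w, w) for w in words)

def respond(statement: str) -> str:
    """Rewrite the first matching statement as a question; no understanding involved."""
    for pattern, template in RULES:
        match = pattern.match(statement.strip())
        if match:
            return template.format(reflect(match.group(1)))
    return "Please go on."  # stock reply when no keyword matches

print(respond("I feel nobody listens to my ideas."))
# Why do you feel nobody listens to your ideas?
print(respond("I am worried about my exams"))
# How long have you been worried about your exams?
```

Every reply is assembled from the user’s own words. The program has no idea what “ideas” or “exams” are, yet the output can read like attentive listening.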

Eliza didn’t understand its interactions with humans any more than Watson understood the Jeopardy! answers. Both programs applied algorithmic rules and used the power of computing machinery to respond quickly. Below is a sample session with Eliza. You too may find yourself comforted by her helpful advice.

Eliza’s simple algorithm has convinced many they’re talking to a real therapist. (Source: Wikimedia Commons)

This ability to simulate insight and wisdom deeply troubled Weizenbaum, leading him to reject this false tech god. He became better known as an AI skeptic and ultimately one of its harshest critics. His book Computer Power and Human Reason, a 1970s best-seller, warned against handing human judgment over to computers and software. Weizenbaum feared that people would rely too heavily on machines, substituting machine judgments for those we reach in ways a machine could never know: through human creativity, imagination, and intuition. As Weizenbaum noted, “No other organism, and certainly no computer, can be made to confront genuine human problems in human terms.”

Machines That (Kind of) Learn

Anyone with even minimal experience on the Web has likely encountered some form of AI – or, as it’s been rebranded, “machine learning.” E-commerce sites routinely offer their users AI-based suggestions for books, restaurants, furniture, and even movies they might be interested in.

Known as recommender systems, these algorithms depend heavily on enormous data sets that track consumer purchases across a Web site. The software guesses, or “learns,” a target user’s preferences by comparing that user’s transactions and browsing history with those of other users. It finds the users most similar in taste and bases its recommendations on what they have purchased or browsed.

This technique is called “collaborative filtering” by the machine learning experts; they’re using the wisdom of the crowd to filter or tune the suggestions. For example, suppose as a subscriber to an online bookstore you’ve just bought a few books by horror writer Stephen King. The algorithms might then examine all users who’ve purchased or clicked on King literature, and then decide from their purchase history that H.P. Lovecraft stories or, perhaps, more recent work by Anne Rice would nudge you into another transaction.
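Here is a minimal sketch of user-based collaborative filtering along the lines of the Stephen King example, assuming purchase histories are stored as sets and similarity is measured with the Jaccard index (shared purchases divided by combined purchases). The users, titles, and the choice of Jaccard similarity are illustrative assumptions; production recommenders work with far larger data and more sophisticated models.

```python
from collections import Counter

# Hypothetical purchase histories: user -> set of books bought.
purchases = {
    "you":   {"Carrie", "It"},
    "alice": {"Carrie", "It", "The Call of Cthulhu"},
    "bob":   {"It", "Interview with the Vampire", "The Call of Cthulhu"},
    "carol": {"Pride and Prejudice", "Emma"},
}

def jaccard(a: set, b: set) -> float:
    """Similarity of two purchase sets: |A & B| / |A | B|."""
    return len(a & b) / len(a | b) if a | b else 0.0

def recommend(target: str, data: dict, k: int = 2) -> list:
    mine = data[target]
    # 1. Find the k users whose purchases look most like the target's.
    neighbors = sorted(
        (u for u in data if u != target),
        key=lambda u: jaccard(mine, data[u]),
        reverse=True,
    )[:k]
    # 2. Let each neighbor "vote" for books the target doesn't own yet,
    #    weighting the vote by how similar that neighbor is.
    scores = Counter()
    for u in neighbors:
        sim = jaccard(mine, data[u])
        for book in data[u] - mine:
            scores[book] += sim
    return [book for book, _ in scores.most_common()]

print(recommend("you", purchases))
# ['The Call of Cthulhu', 'Interview with the Vampire']
```

The Lovecraft title ranks first because two similar readers bought it, so its votes add up. That same summing of votes is one reason widely purchased items tend to crowd out lesser-known ones.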

Ubiquitous recommender systems, alas, have been found to be biased toward popular items. That’s the downside of the crowd-based approach: Popular items appear in more users’ preference lists and tend to crowd out lesser-known products. Software-driven suggestions on e-commerce sites can also become self-fulfilling prophecies: Books and movies become popular because the algorithms say these are the ones you should be reading!

Like many video sites, MagellanTV displays titles we think will interest our viewers. Are recommendation algorithms better than human-curated suggestions?

Collaborative filtering software is an example of what AI scientists call unsupervised machine learning: The software decides for itself, without human help, which items the user is most likely to purchase. This contrasts with supervised learning, in which the software is first given sample data sets that its human teachers have already categorized, training it with, say, curated lists of cookbooks or film-noir classics.

Biased Machines and Their Human Trainers

In supervised learning, the data set acts as a teaching environment for the young learning software. Unfortunately, the software can also be taught a different kind of bias.

If the training data are not carefully selected, if the human classifications are skewed, or if the algorithms are not carefully reviewed, we may end up in the situation that Joseph Weizenbaum and other AI skeptics feared: The algorithms dispense an answer that is blindly accepted by humans who believe in the infallibility of a silicon brain, even when it’s clear the software is far from perfect.

“Apple Card is a sexist program. It does not matter what the intent of individual Apple reps are, it matters what THE ALGORITHM they've placed their complete faith in does. And what it does is discriminate.” —Tech entrepreneur David Heinemeier Hansson

There are more than a few unsettling stories these days of machine learning algorithms falling short. One notable incident involves Apple co-founder Steve Wozniak, who discovered that his Apple Card borrowing limit was ten times his wife’s, even though they share bank accounts and file joint tax returns. Thankfully, Wozniak was able to push back, tweeting about this algorithmic absurdity and its apparent gender bias.

This is not to say that the developers and the companies behind these algorithms are necessarily biased. If the training data are not representative of the customers, then the software is taught to discriminate: skewed data set in, bad decisions out.

A few years ago, Amazon began building an AI-based hiring tool meant to avoid the messy and too often biased process of human HR staff reading résumés and deciding whether to contact a job applicant. For a training data set, Amazon used its own 10 years of hiring data, which came mostly from … male candidates.

The machine learning software was taught to attach more weight to aggressive language like “execute” and other biz-words found in résumés. However, women candidates used these words less often, and so the machine learning algorithms effectively discriminated against them.
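A toy sketch of how skewed training data can teach a scoring model to favor certain words. Amazon’s actual model has not been published; the résumé data, the word list, and the simple “hire rate per word” scoring rule here are hypothetical, chosen only to show the mechanism. Gender never appears in the data, yet a word common among past (assumed mostly male) hires ends up acting as a proxy for it.

```python
from collections import defaultdict

# Hypothetical past résumés as (words used, was the candidate hired?).
# Assumption: past hires were mostly men, who in this toy data tended to write "executed".
history = [
    ({"executed", "python"}, True),
    ({"executed", "managed"}, True),
    ({"executed", "python"}, True),
    ({"led", "python"}, False),
    ({"collaborated", "python"}, False),
    ({"collaborated", "managed"}, True),
    ({"collaborated", "led"}, False),
]

def learn_word_weights(data):
    """Weight each word by the hire rate of past résumés that contained it."""
    seen = defaultdict(int)
    hired = defaultdict(int)
    for words, was_hired in data:
        for word in words:
            seen[word] += 1
            hired[word] += int(was_hired)
    return {word: hired[word] / seen[word] for word in seen}

def score(resume_words, weights):
    """Average the learned weights of the words the model has seen before."""
    known = [weights[w] for w in resume_words if w in weights]
    return sum(known) / len(known) if known else 0.0

weights = learn_word_weights(history)
# Two candidates with the same skill, described with different verbs.
print(round(score({"executed", "python"}, weights), 2))      # 0.75
print(round(score({"collaborated", "python"}, weights), 2))  # 0.42
```

Nothing in the code mentions gender, which is the point: skewed data set in, bad decisions out.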

To its credit, Amazon recognized the issue and ultimately scrapped the project. The episode holds lessons for companies that rush to replace humans with poorly thought-out AI solutions. It’s not that AI can’t perform almost as well as workers at certain tasks. But as we’ve seen, we are all too willing to ascribe human wisdom and insight to silicon-based computing devices. The larger issue is that the humans who configure, monitor, and adjust AI applications should first understand how the algorithms work and what their limitations are, and then have the confidence to override bad AI decisions.

Ω

Title Image: Hands of Robot and Human Touching on Big Data Network Connection Background by ipopba via Adobe Stock.