Some people are excited about the possibility of conscious computers, while others recoil in horror. But what does it mean to say that a computer could be conscious? Spoiler alert: Philosophers don’t agree on an answer!

◊

I know you are reading this article. Perhaps you are squinting into the glow of a cell phone screen – or maybe a desktop monitor. No! You’re holding a tablet or peering intently at a laptop display.

What I don’t know is which description is correct. Nevertheless, I assume at this very moment you are having an experience. That’s because you are the sort of creature that senses, perceives, feels emotions and desires. You’re the sort of creature that thinks, wills, reflects, believes, and understands. You’re the sort of creature that projects itself into the future and then pursues goals. And I also assume that the experience you’re having right now is something like mine. I believe, in short, you are conscious.

But what, exactly, does that mean – just what is consciousness? Is it just that bundle of cognitive and emotional capacities listed above? And even if I am confident that I’m conscious, why should I believe you are? Why should I believe anything else is conscious?

According to computer scientist Blake Lemoine, there’s a Google chatbot that’s got the feels – in short, it’s conscious. This artificial intelligence (AI) interface called LaMDA (Language Model for Dialogue Applications) is supposedly as emotionally sophisticated or competent as an eight-year-old, and intelligent enough to know physics.

I Know I Am, But What Are You?

“I think, therefore I am,” René Descartes reasons in his Discourse on Method. According to Descartes, “I” am fundamentally self-consciousness. To deny that I exist requires my existence – specifically as a thinking thing. Hence, as Descartes concludes in another work, “this proposition, I am, I exist, is necessarily true whenever it is put forward by me or conceived in my mind.”

For Descartes, to be “me” is to be a mind, an immaterial thing – a soul – whose essence is thought. What it is to be me, in other words, is to be a thinking thing; my body is something else altogether. More specifically, thinking is always first-person singular. All my thoughts are…mine. My memories, my feeling confused or delighted, my seeing letters and words appear on the laptop screen as I type – “I” belong to what consciousness is. And Descartes would think, as most of us do, that an artificial intelligence has no “I.” In fact, Descartes concluded that non-human animals are mere automatons – unthinking machines, not substantively different from a computer.

Edward “The Tadpole” Pibbles, a pit bull mix. Descartes believed animals were “non-thinking,” essentially machines since they appeared to him to lack consciousness. (Credit: Mia Wood)

If you’ve ever spewed a bunch of expletives and obscenities at Siri, for example, you quickly conclude the well-mannered AI isn’t self-conscious. Siri does not exhibit indignation or appear to take offense when you rant and rage, feel pride at your gratitude, or giggle over a joke. (And what does it say about us that we feel anger, gratitude, and playfulness toward a machine?) The fact is, Siri can’t engage – Siri can’t care about what you and I say. Instead, the AI is programmed to sift through loads of text and identify patterns to predict the appropriate response.

What about Eddie, my dog? He doesn’t seem to feel offended or indignant, either. Does that mean he’s not self-conscious? Probably. Nevertheless, Descartes is wrong to think that creatures like Eddie are machines lacking consciousness altogether. Indeed, many of us believe that non-human animals have varying levels of consciousness, which philosopher Thomas Nagel characterizes as “the subjective character of experience.” Thinking about what it means to have experience opens another way into the question of whether artificial intelligence is, or will be, conscious in the way you and I are.

The Ghost in the Machine: Does Mind-Body Dualism Exist?

Suppose we conclude that Descartes is wrong about minds, too. Suppose there aren’t two kinds of things in the universe – there aren’t immaterial minds and material bodies. Only material things exist.

Humans and machines are material things. The difference is that humans have specific features or properties that machines don’t. So, even though we aren’t composites of mind and body – there is no ghost in the machine – human beings have the capacity for thinking in a way that machines do not.

Philosopher Gilbert Ryle called Descartes’s mind-body dualism “the dogma of the ghost in the machine.”

Descartes’s dualism may actually distract us from investigating whether non-humans are conscious. In other words, by severing reality into two – the immaterial and material – Descartes eliminates the theoretical possibility of conscious computers. If, on the other hand, we suppose that there is no immaterial or transcendental reality, we are left with material things, at least some of which are conscious. What other conscious, material things are there? (Besides Eddie, of course.)

The Turing Test Separates the Humans from the ‘Bots’

Remember that, according to Google computer scientist Blake Lemoine, at least one AI, LaMDA, is conscious. “It doesn’t matter whether they have a brain made of meat in their head,” Lemoine said in a Washington Post story. “Or if they have a billion lines of code. I talk to them. And I hear what they have to say, and that is how I decide what is and isn’t a person.” What does that mean?

The 20th-century British mathematician Alan Turing proposed a thought experiment that bears on the question. In the “imitation game,” a human questioner tries to determine which of two unseen participants is a computer and which is a human. The questioner plays by posing written questions like, “Do you play chess?” The role of the human participant is to help the questioner make the correct determination, while the role of the computer is to fool the questioner into mistaking it for a human.

The Imitation Game is a 2014 biopic starring Benedict Cumberbatch as Alan Turing. It is based on Andrew Hodges’s 1983 biography, Alan Turing: The Enigma.

The Turing Test, first proposed in 1950, became the standard for attempts to answer the question, “Can machines think?” In one sense, given that humans created machines, the answer is clearly yes. After all, machines – or at least, the programs that run on them – are made to compute, to calculate, to draw inferences.

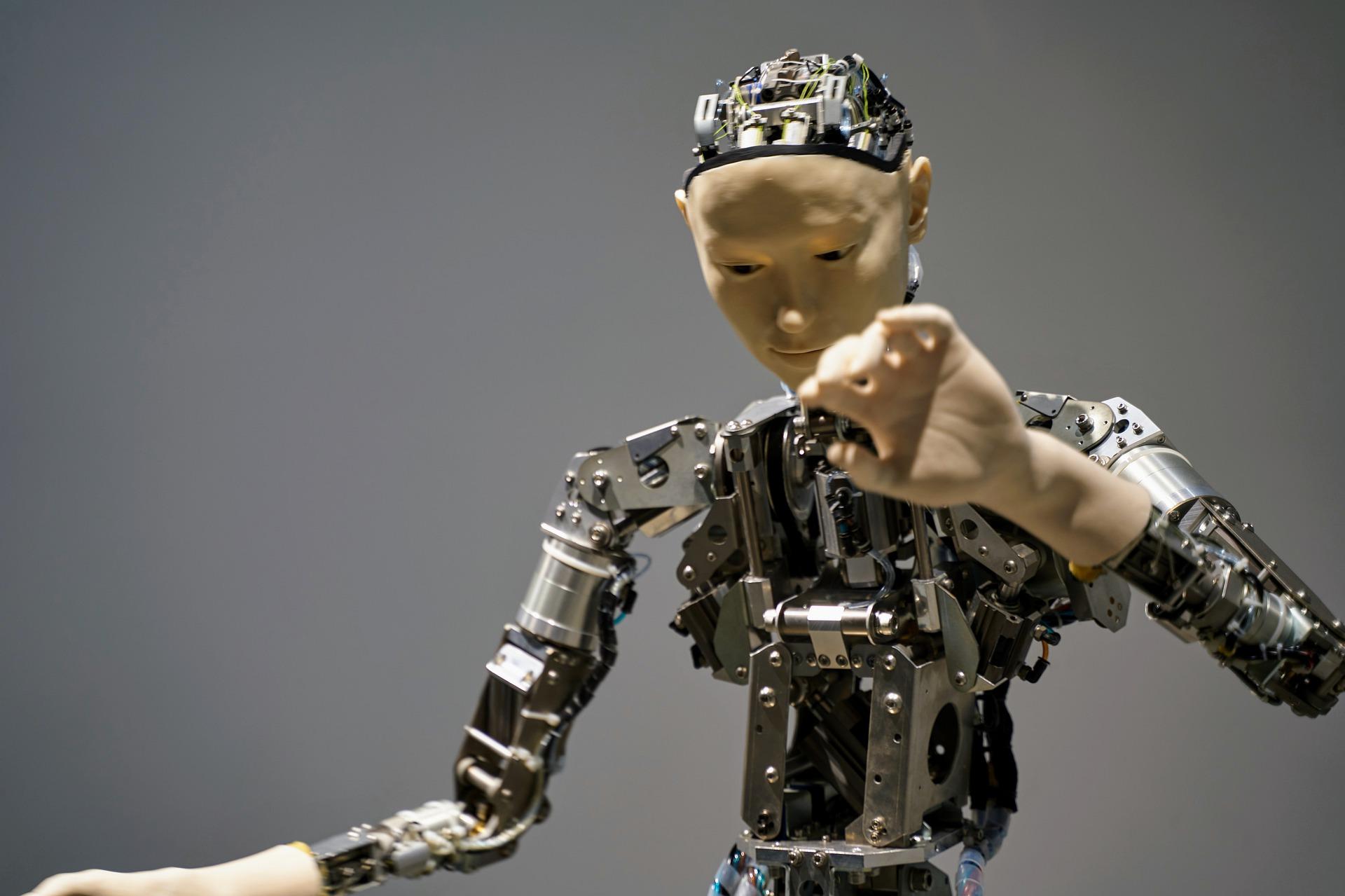

(Credit: Gerd Altmann, Pixabay)

These are thinking activities, but whether they reflect cognitive capacities is another matter. It’s not clear that running a computer program is equivalent to the sort of mental aptitude you and I have. Such programs don’t even reach the low threshold of consciousness exhibited when we simply navigate our world without also reflecting on it, as we do when groggily making the morning coffee on autopilot.

To help us think about what’s going on here, consider the following question: Have you ever had the discombobulating experience of trying to make sense of something you see or hear – something that, however momentarily, confuses you, so you can’t make out what’s happening? You see an object in the distance. It looks like a cat but doesn’t move like one. You’re flummoxed until you realize you’re seeing a raccoon.

That moment reveals that your cognitive structures contribute to creating the thing you call experience. Your confusion shows that what you take for granted as a matter of daily reality is actually the result of rather complicated processes to which you don’t (or can’t) pay attention. We just do our thing, generally believing that experience comes to us complete or intact. The fact that we can take an intellectual step back to reflect on what the cat-raccoon glitch teaches us about cognition leads us to an interesting thought about experience itself.

Rocks don’t experience anything, but Eddie does. You and I do. “Experience” means there is something it is like for me to be me, even when I’m on autopilot making morning coffee. So far as we can tell, there is nothing it is like for an artificial intelligence to be an artificial intelligence. In other words, a computer doesn’t experience anything – remember Siri’s dispassionate response to your hypothetical diatribe? That’s because AI does not have a point of view.

‘The Chinese Room’ Separates Information from Understanding

Suppose you are in a room with a box of standard Chinese symbols and a rule book in English. You do not know Chinese, but no matter. Your job is to match one set of Chinese symbols to another by following the rules written in English. It could go something like this: “Take the symbol, x, and place it immediately after the symbol, y. Now take the symbol, z, and place it immediately before the symbol, x.”

What you’re doing, according to philosopher John Searle, is simply manipulating symbols or processing information. That’s just what the Turing computer does. You don’t understand Chinese, and Turing’s computer doesn’t understand the symbols it’s given. It just processes information in a stepwise fashion. To someone who knows the Chinese symbols, however, sense has been made, and it looks like you know Chinese!
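For readers who think in code, the room can be pictured as a lookup table. What follows is only a minimal sketch under loud assumptions: the names `RULE_BOOK`, `FALLBACK`, and `room_operator` are hypothetical, and the handful of symbol strings are illustrative placeholders of my own, not anything Searle specifies. The point it tries to show is that the operator compares shapes and hands back whatever the book prescribes; nothing in the program represents what a symbol means.

```python
# Hypothetical rule book: each entry pairs an incoming sequence of symbols
# with the sequence to hand back. The entries are illustrative placeholders.
RULE_BOOK = {
    ("你", "好", "吗"): ("我", "很", "好"),
    ("你", "会", "下", "棋", "吗"): ("会",),
}

# Hypothetical default reply for any sequence the book does not list.
FALLBACK = ("请", "再", "说", "一", "遍")


def room_operator(symbols):
    """Return the symbols the rule book prescribes for the given input.

    The lookup is plain tuple equality: shapes are matched against shapes.
    No step here models the meaning of any symbol.
    """
    return RULE_BOOK.get(tuple(symbols), FALLBACK)


reply = room_operator("你好吗")
print("".join(reply))  # prints 我很好
```

To someone outside the room who reads Chinese, the reply looks sensible. Inside the function, there is only matching and copying.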

Remember creepy, malevolent HAL from 2001: A Space Odyssey? Or, more recently, do you recall polyamorous Samantha from Her and disturbing Ava from Ex Machina? Not every fictional AI unsettles us, though; plenty delight us. Consider two fictional cutie pies, R2-D2 in Star Wars and WALL•E. And Robbie, from Isaac Asimov’s I, Robot, inspires our sympathy.

Computers simply don’t have the “wetware,” as Searle puts it – they don’t have the cognitive capacities for consciousness as we know it. If we believe that consciousness is something neurobiological (not something in addition to that material stuff), and we know that machines are not biological things, then machines aren’t conscious. Lacking the requisite material elements, computers can’t evolve consciousness. (But animals can!)

Of course, AI can perform some tasks as well as humans. That’s not surprising, given that we humans made machines, the architectures of which support some of the same functions and patterns natural to humans. Moreover, we constructed them in ways that leverage brute computing speed and power. For example, AI can count and do arithmetic faster and more accurately than humans. This does not equate, however, to the sort of understanding that you and I have when we laugh at a joke. It’s misleading, then, to use language like “learning” in “machine learning,” and “neural” in “neural networks,” as computer scientists do when talking about artificial intelligence. (Maybe we should revisit “intelligence,” as well.)

Lions and Tigers and Bears? Oh, No! It’s Philosophical Zombies

There are lots of behaviors you and I share with each other, with non-human animals – and with computers. These behaviors can be explained entirely in terms of physical mechanisms and states. Consider, for example, the fact that we can explain how electrical impulses function in the machinery of a biological organism or a computer. Or contemplate the fact that pain is just a group of nerve fibers firing.

Next, let’s classify any mechanical behaviors that imitate a human as those of a zombie, a thing that apparently does everything you and I do, except it does so without consciousness. It can talk about experiences, create poetry, and even laugh when tickled. In short, a zombie has all the brain states and functions you and I have, but it lacks consciousness.

(Credit: Gerd Altmann, Pixabay)

But if that’s true, then (human) consciousness is not identical with brain states and functions. What, then, do we do with the claim that consciousness is nothing other than brain states or functions? As philosopher David Chalmers puts it, “There is nothing it is like to be a zombie.” So also, in this view, there is nothing it is like to be a computer.

See, if some computers are conscious, or have the capacity to become conscious the way that I am, or even the way I’m convinced my dog is, surely there is a feature common to all three. If computers aren’t conscious and can’t be made so (or we wouldn’t want them to be), then, as philosopher Daniel Dennett tells us, an AI is “competent without comprehension.” There aren’t any philosophical zombies, since such creatures simply don’t exist in a world that follows a specific set of natural laws – our world, as it happens. Zombies may be logically possible, but not physically plausible, and Dennett argues that consciousness is physical.

The real question for Dennett and others, however, is whether we should pursue creating conscious artificial intelligences. Do we want a world in which Isaac Asimov’s Three Laws of Robotics are put to the test?

Hey, Siri…? Alexa…? How do I turn this thing…

Ω

Mia Wood is a professor of philosophy at Pierce College in Woodland Hills, California, and an adjunct instructor at various institutions in Massachusetts and Rhode Island. She is also a freelance writer interested in the intersection of philosophy and everything else. She lives in Little Compton, Rhode Island.

Title image source: Tumisu, pixabay.com