Visual art is routinely incorporated into datasets that inform AI. But has AI muddied how we think about authenticity in art today?

◊

Since its inception, I’ve been hesitant to use AI. There’s no denying that artificial intelligence is incredibly powerful, but when I hear about artificially generated images winning local art shows and being selected as the cover art for national magazines, the artist inside me recoils.

Artists have been taking inspiration from other artists, well, forever. However, when it isn’t made clear who (or what) created a work of art, it’s natural that we should question whether or not it still qualifies as art. Academic study of art almost always involves a discussion about materials used and the subject matter that is depicted. Often, the deepest realizations come through examination of a piece’s authenticity, historical context, and ownership.

Today, though, when AI can generate so-called artworks based on just a few prompts, replicating an artist’s style and subjects is easier than ever. In fact, AI learns to create its own images by studying human artists’ creations. Artists and writers are expected to cite sources and build on, rather than steal, the ideas of others. When a copycat has no agency, let alone a name, it’s hard to draw the bright moral lines that we paint so easily when thinking about conventional art forgery.

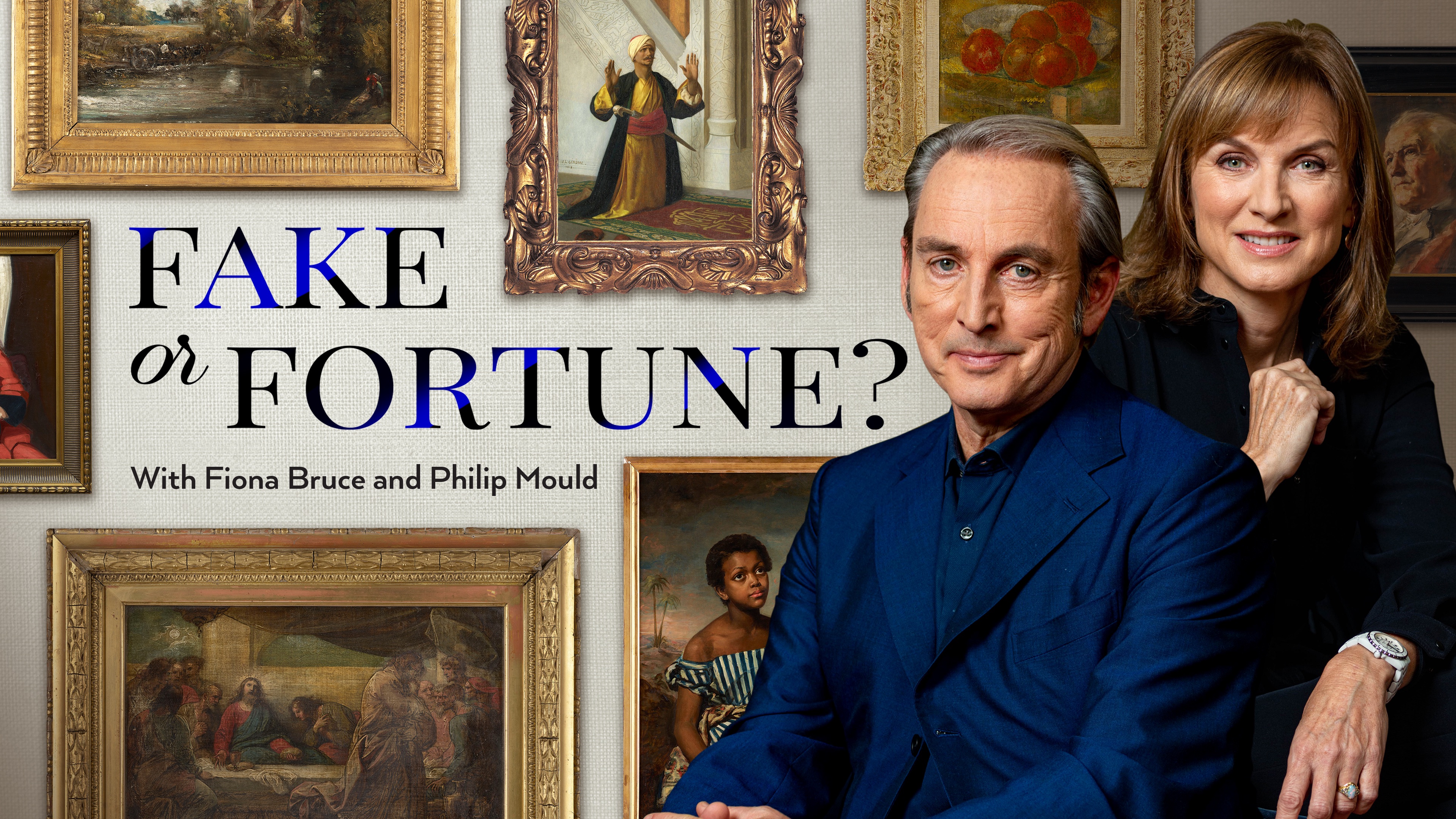

For more on art forgery, check out the MagellanTV series Fake or Fortune.

Famous Forgeries

A finished work of art is not just the object that spectators encounter on the wall of a museum or gallery. So much of what gives art cultural meaning resides in its materiality, its subject matter, the context in which it was created, and the forward-thinking of its creator. More fundamentally, people value art for what it makes them feel. I’ll resist diving deeply into aesthetics and just ask that you take a moment to recall an artwork that impressed you. We see so many images and objects every day that for something to lodge itself into your memory, some deeper process must be at play.

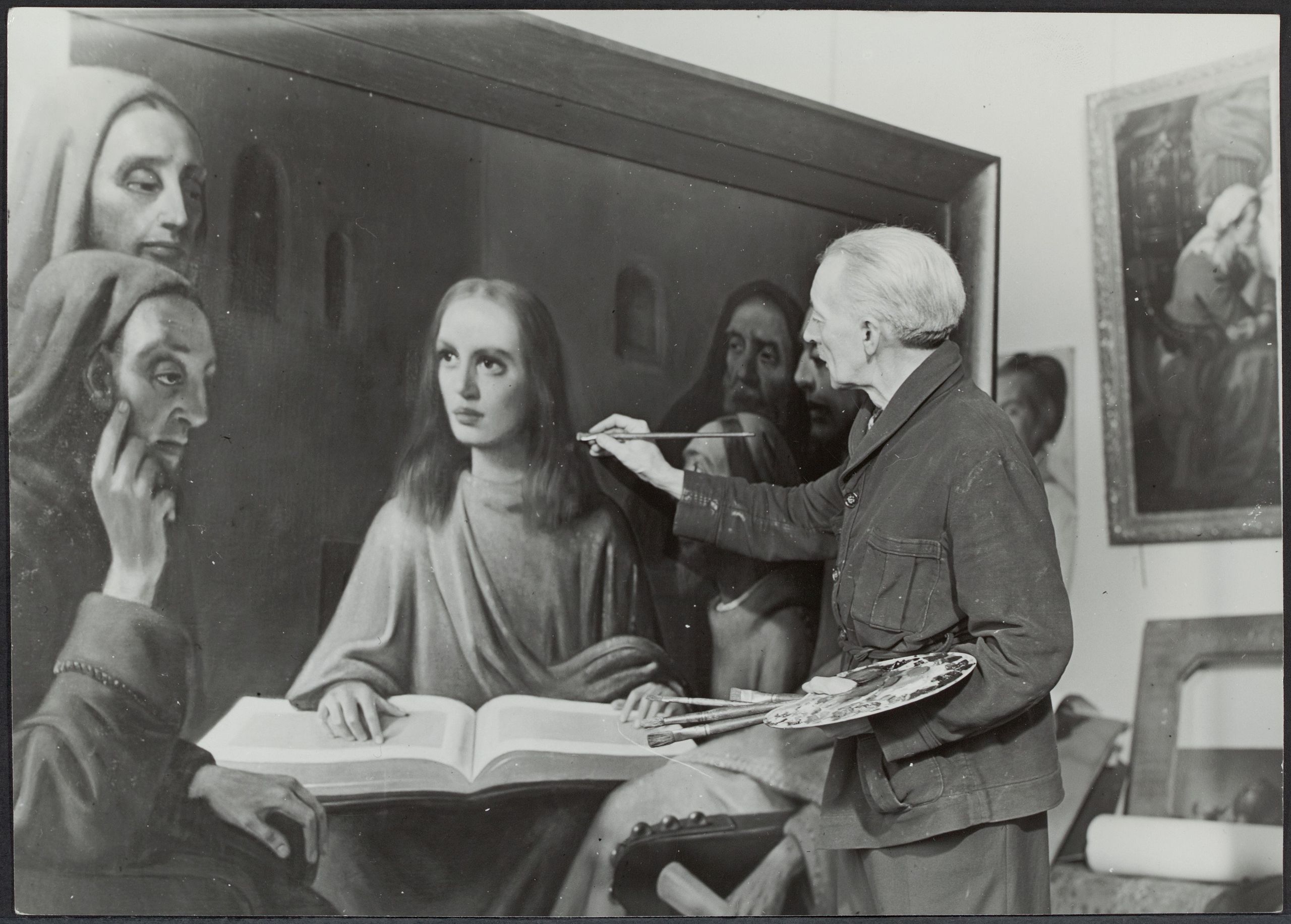

This memorable moment of engagement with a work of art is what art forgers struggle the most to replicate. Art historians determine the authenticity of a work of art by chemically testing its materials and studying how its composition aligns with other pieces created by a particular artist at a given time. There’s both art and science to sniffing out a forgery, but that detective process also gives forgers a blueprint from which they can essentially reverse engineer famous creations.

Infamous forger Han van Meegeren “painting” a fake Vermeer, October 1945 (Credit: Koos Raucamp (ANEFO), via Wikimedia Commons)

Forgers have made names for themselves imitating some of the world’s most recognizable visionaries. Paintings credited to Rothko, Picasso, Vermeer, and Van Gogh – often selling for unbelievable sums – have found their way onto the walls of museums. Some art historians estimate that as many as 20 percent of the works currently in museums’ collections might soon be reattributed to different artists – or even to skilled fraudsters. Forgery has been around as long as art has been revered, and for exactly as long, the act of forgery has been considered a crime and condemned by society.

In recent years, the age-old art of forgery has transformed with the help of image-generating artificial intelligence technologies. Computer programs can replicate artists’ styles, techniques, and subjects with mathematical accuracy. AI image generators are creating original works based on what the software has learned by studying the styles and subjects of real artists’ works.

The groundbreaking 3D-printed oil painting, The Next Rembrandt, showcases AI’s ability to replicate the technical skill of one of the world’s great artists. Without any artist’s touch, this project was sketched up by a marketing company, fabricated by a 3D printer, and funded by a Dutch bank.

The Next Rembrandt (Credit: ING Group, via Wikimedia Commons)

The future of forgeries might be rooted in technology, and telling apart what is made by hand and what is generated by AI will become harder and harder. While many bask in awe at the potential of AI, I’m still grappling with questions about who owns what and what that might mean for the future of art. Borrowing ideas is a given with art and, by extension, forgeries, but when a non-human agent is the creator in question, can you even accuse it of “stealing”?

Artificial Intelligence at Large

Artificial intelligence can generate images in a self-referential process where a computer program produces novel responses based on what it has “learned” from massive datasets. Some programs, called multimodal large language models, can analyze not only text but also photos, videos, and audio files. These models churn out images and video files based on the “study” of human-created art. The AI systems demonstrate some knowledge of representation, composition, color theory, and design.

First physical magazine cover created by an artificial intelligence (Credit: DALL·E 2, Public domain, via Wikimedia Commons)

OpenAI introduced the public to this radical technology in a program called DALL-E. With just a few words, anyone can generate an image with a specified subject, setting, theme, color scheme, layout, etc. If any aspect of the image that DALL-E generates doesn’t align with what you were looking for, you simply re-input your commands, modifying the language or adding more parameters. In some ways, AI-generated art is like a collage, but rather than specifically copying and pasting from pre-existing art, AI incorporates patterns, color theories, and hierarchies of composition.

The name DALL-E is a portmanteau of WALL-E, the title character of the Pixar film, and the Spanish painter Salvador Dalí.

Like a young child in an art class, these computer programs learn about abstract concepts such as form, design, color theory, and storytelling, then study examples of those principles being applied. Similarly, by studying how other people apply abstract ideas, artists learn how they can translate ideas into images. With millions – if not billions – of images in its dataset, AI has an edge over humans. It can study and learn more than a person could ever have the time or mental capacity to. For all the ways it has a leg up on people, though, AI comes with one big caveat – it can’t explain itself.

AI Efficiency vs. Human Authenticity

In some ways, AI is doing the same thing as artists – not just in terms of content creation, but in how it challenges the norm and raises existential questions. Generative AI’s creations could be considered as culturally subversive as Édouard Manet’s Luncheon on the Grass (Le Déjeuner sur l’herbe) or Andy Warhol’s Campbell’s Soup Cans – both of which borrowed the foundations of widely recognized images to construct new ones. We can only imagine how those visionaries might have harnessed AI to generate art were they alive today.

AI uses technology to make a comment on culture and on art itself, but all of the images it generates exist under the veil of artificiality. After all, every human artist begins their journey with a blank canvas in some capacity, whereas computers have to be fed prompts and explicitly instructed to embark on the creative process. These images aren’t invented or dreamed or loved. Computers don’t (yet) have the ability to think critically or to design with the intention of conveying a certain point of view; AI-generated content that seems to express opinions is just a byproduct of skewed datasets.

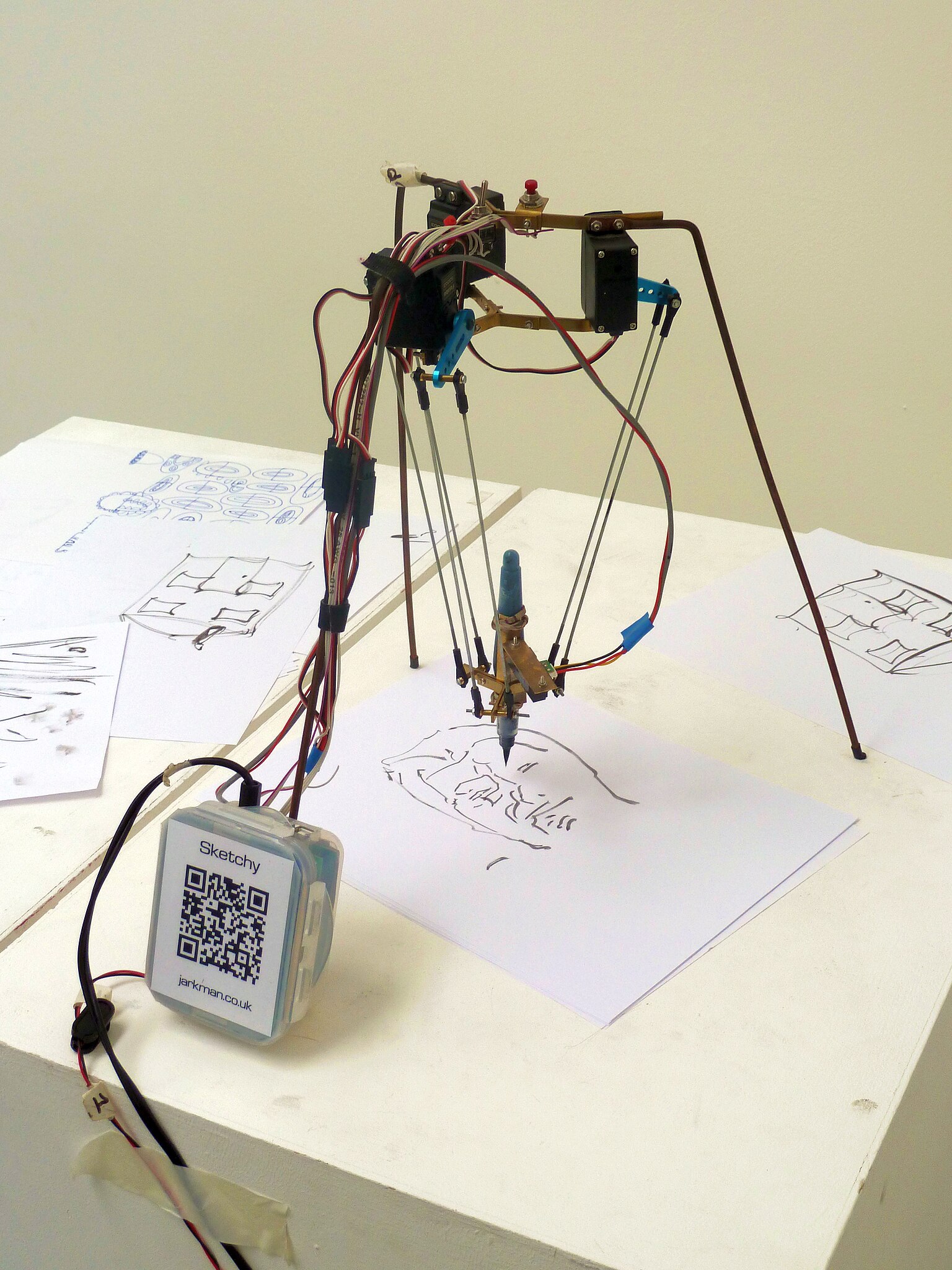

Sketchy, a portrait-drawing delta robot (Credit: Andy Dingley, via Wikimedia Commons)

In the world of design and visual art, AI-produced images have appeared on the covers of magazines and made their way into movies, generating extras in the backgrounds of crowded shots. Websites like Etsy and Fiverr, known for connecting freelance artists and creatives with contract work, are saturated with computer-generated images rather than artist-created work. Image generation takes a fraction of the time that an artist would expend to produce a similar work. Why pay a graphic designer to draft four versions of something in Photoshop when you can spend four minutes typing words into DALL-E to get exactly what you want?

Perhaps this is a natural transition to the future of the art industry. Amid the radical changes wrought by the Information Revolution, it seems that nearly every element of society has at some point had to reckon with technology replacing some human activities and jobs. The issue with artificial intelligence ousting artists is that AI could not exist without their work and needs it to evolve. AI is only as creative as the human artists whose work it copies and adapts. But when artists run out of work and stop contributing their own ideas to the machine, will AI stop evolving?

Language Games with Language Models

In the age of AI, words like “intelligence” and “creativity” have taken on new meanings. The Merriam-Webster dictionary recently published an addendum to the definition of the word “intelligence” to include “the ability to perform computer functions.” While an artist’s signature used to be a sacred stamp of authenticity, artificial intelligence has made it possible for just about anyone to type a few keywords and produce something that could be passed off as “art.” Moreover, AI can also take the subversive possibilities of art way too far: deepfake videos of politicians relaying lies, and pornography with superimposed faces, go to show that the deployment of AI isn’t always well-intentioned and creative.

Perhaps I am too protective of the word “creativity,” but I’ve always understood it to be more about processes of thinking than about the novelty or catchiness of their products. As artificial intelligence fundamentally changes how businesses work with creatives, perhaps we all ought to reflect on our sentiments toward art forgers. As a society, we generally look askance at people who plagiarize or fail to acknowledge others whose work informed theirs. Legally, selling a forged artwork attributed to someone who didn’t create it is a crime; yet we embrace AI with open arms.

AI-generated image using the prompt “Magellan TV,” by DALL-E, 2023 (https://openai.com/dall-e-2)

Some artists have been using AI as a part of their creative process, but for specialists like illustrators and concept artists, digital image-generating algorithms are eliminating the need for their highly specialized crafts. The U.S. Copyright Office will protect AI-generated works with verified human authorship, but people must disclose their use of AI. On the other hand, when DALL-E whips up an image, there is no asterisk crediting the artists from whom it learned how to produce it. After all, it’s just learning from artists, not stealing. Right?

Ω

Daisy Dow is a freelancer and contributing writer for MagellanTV. Originally from Georgia, she went to college to study philosophy and studio art. She now works in Chicago as a media relations coordinator.

Title Image: Man in front of multiple art prints (Credit: Beata Ratuszniak, CC0, via Wikimedia Commons)